-

Hirota’s Hypoxic Fraud sanctioned in Japan

Kiichi Hirota has been found guilty of research fraud in Japan. He was previously involved in Gregg Semenza's Nobel Prize discovery of the HIF gene. Semenza has 15 retractions, and the work of his Nobel co-recipients isn't entirely kosher either.

in For Better Science on 2026-04-21 05:00:00 UTC.

-

Autism experts venture to set the narrative for INSAR, and more

Here is a roundup of autism-related news and research spotted around the web for the week of 20 April.

in The Transmitter on 2026-04-21 04:00:17 UTC.

-

Frameshift: How Mia Thomaidou tapped a fellowship to connect neuroscience to criminal justice

As a fellow at the Dana Foundation, she merged two familiar passions and discovered a new one: science philanthropy.

in The Transmitter on 2026-04-21 04:00:04 UTC.

-

Trump’s order on psychedelics could have far-reaching science consequences

A new executive order could make it easier for researchers studying how psychedelic drugs such as psilocybin, LSD and ibogaine may be useful in medicine

in Scientific American on 2026-04-20 20:15:00 UTC.

-

NASA’s 2028 moon landing may be delayed because of lack of space suits, watchdog report warns

NASA needs new space suits to land astronauts on the moon by 2028, but development is behind and in danger of slipping further, according to a report from the agency’s Office of Inspector General

in Scientific American on 2026-04-20 19:22:00 UTC.

-

Astronauts’ brains don’t fully adapt to life in microgravity, new study finds

New research shows astronauts tend to grip objects in microgravity as if they felt as heavy as or heavier than they would on Earth, a finding that could help future space exploration

in Scientific American on 2026-04-20 17:40:00 UTC.

-

Increasing heat can boost malnutrition among children

In a study of 6.5 million children in Brazil, higher temperatures were associated with worse nutrition outcomes, especially in vulnerable groups.

in Science News: Health & Medicine on 2026-04-20 17:00:00 UTC.

-

Major pharmacology journals flag another 15 papers by scientist facing criminal probe

A leading pharmacologist in Italy accused of embezzling research funds is now the subject of coordinated editorial action by one of the field’s professional societies.

The American Society for Pharmacology and Experimental Therapeutics announced expressions of concern for 12 papers, corrections for two and a retraction in an editorial published April 3 in The Journal of Pharmacology and Experimental Therapeutics and Molecular Pharmacology. Salvatore Cuzzocrea, a pharmacology professor at the University of Messina, was a coauthor or corresponding author on all the papers. As we reported previously, Cuzzocrea is being investigated in Italy for allegedly embezzling more than 2 million euros in research reimbursements and allegedly rigging university contracts.

Since our reporting on Cuzzocrea a year ago, journals have retracted five more of his papers. One, from BMC Neuroscience, was retracted 10 days after our reporting for containing data that appeared in an earlier publication. A different paper was retracted last year from Biology for containing overlapping images, another from Biomedicine & Pharmacotherapy for image overlaps, and the International Journal of Molecular Sciences retracted two more this year for containing duplications and “inappropriate editing” of micrographs.

The latest action from ASPET brings Cuzzocrea’s total to 25 retractions, per our database. The announcement follows an independent analysis by the ethics editor of the society, who found duplicated and altered western blots, poor image quality, and datasets without enough information on how they were collected and analyzed.

Cuzzocrea has not responded to our requests for comment.

The retraction was of a 2010 paper, published in The Journal of Pharmacology and Experimental Therapeutics, for containing western blot data that were reused a year later in the now retracted paper in BMC Neuroscience. The retraction notice states the duplication wasn’t disclosed, and the authors couldn’t explain why it had happened. Cuzzocrea is the corresponding author on both papers.

In several of the cases flagged by ASPET, the authors said they could no longer retrieve the original data because of the time passed since publication; the papers in question were published between 2002 and 2022. Many of these articles fall outside of ASPET’s and the Committee on Publication Ethics’ guidelines for data retention, the editorial stated, but the editors nevertheless wrote that image integrity concerns “require satisfactory explanation and resolution regardless of their date of publication.”

Cuzzocrea acknowledged that all the data flagged for image integrity issues had originated in his lab, according to ASPET’s editorial, and the ASPET editors wrote that he and his coauthors had “ample opportunity” to supply original data and explanations for the discrepancies but were unable to address the concerns.

A spokesperson for the University of Messina told us, “Within the Italian system, universities are not generally responsible for assessing the scientific publications of their academic staff, except for matters that fall within their institutional remit and in compliance with the applicable regulations.”

When we spoke to Cuzzocrea last year about previous retractions, he told us retracted papers were a small fraction of his overall publication record. Finding errors and duplications made by other members of his lab was harder 20 years ago, without the help of artificial intelligence, he added.

The new corrections, issued to two papers in The Journal of Pharmacology and Experimental Therapeutics, involved a labelling error and an overexposed western blot. The 12 expressions of concern were given to “alert readers to the specific image issues,” which also include problematic western blots and photomicrographs.

“These editorial decisions are required to correct the scientific record, irrespective of the relative impact of the identified image issues on the authors’ conclusions contained in these individual articles,” the editors wrote.

Cuzzocrea remains a professor at the University of Messina. He is facing criminal trial for allegedly awarding university contracts without having a bidding process. According to reports, authorities have seized more than 2.5 million euros, about US$2.9 million, of his assets and allege he misused research funds between 2019 and 2023. Italian media reported that Cuzzocrea had used the research funds for personal travel and expanding his equestrian facility — spending the money on breeding horses and building stables, according to a 700-page court document.

Despite the ongoing investigations, he remains active in the academic community. Portuguese news outlets report he is a candidate to lead the University of Porto. That university did not respond to our request for comment. Back in Italy, he has reportedly requested a transfer to other universities and a sabbatical year from the University of Messina.

Like Retraction Watch? You can make a tax-deductible contribution to support our work, follow us on X or Bluesky, like us on Facebook, follow us on LinkedIn, add us to your RSS reader, or subscribe to our daily digest. If you find a retraction that’s not in our database, you can let us know here. For comments or feedback, email us at team@retractionwatch.com.

in Retraction watch on 2026-04-20 16:27:27 UTC.

-

Risk of ‘megaquake’ in Japan higher after powerful earthquake strikes

After a magnitude 7.7 earthquake struck off the coast of Japan and set off tsunami warnings, there’s an elevated risk of a “megaquake” following in its wake

in Scientific American on 2026-04-20 16:21:00 UTC.

-

NASA’s Voyager 1 spacecraft down to just two working science instruments

This iconic spacecraft launched nearly 49 years ago and is running perilously low on power

in Scientific American on 2026-04-20 15:48:00 UTC.

-

The strange way cocaine water pollution is changing salmon

It turns out that salmon exposed to cocaine through water pollution do a lot of swimming—which may not be a good thing

in Scientific American on 2026-04-20 15:00:00 UTC.

-

See Bruce the parrot wield his broken beak like a deadly weapon

Bruce the Kea parrot is missing the upper half of his beak, but he has turned this disability into a weapon to keep subordinates in line

in Scientific American on 2026-04-20 15:00:00 UTC.

-

Episode 331 - Robert Froemke, PhD

On April 16, 2026, we met with Dr. Robert Froemke of New York University to discuss his work on parenting and co-parenting in mice. We covered a wide range of topics including his video approach to discovery in behavioral experiments and the role of oxytocin in parental behavior.

Guest:

Robert Froemke, Professor, Department of Otolaryngology and Department of Neuroscience at New York University Langone Health.

Participating:

Alfonso Apicella, Department of Neuroscience, Developmental and Regenerative Biology, UT San Antonio

Alice Bertero, Department of Neuroscience, Developmental and Regenerative Biology, UT San Antonio

Jon Moler, Department of Neuroscience, Developmental and Regenerative Biology, UT San Antonio

Host:

Charles Wilson, Department of Neuroscience, Developmental and Regenerative Biology, UT San Antonio

in Neuroscientists talk shop on 2026-04-20 15:00:00 UTC.

-

Magnetic muon measurements and gene-therapy advances win $3 million Breakthrough prizes

This year’s winners include hundreds of physicists across more than 30 institutions

in Scientific American on 2026-04-20 14:22:00 UTC.

-

The Science of Manifestation, is there really evidence to support it?

Manifestation is everywhere right now. Can visualising success really change your life? Or is this just repackaged wishful thinking?

in Women in Neuroscience UK on 2026-04-20 14:02:28 UTC.

-

A vaccine for Lyme disease could be on the horizon

The vaccine candidate is the furthest any shot has gotten since the last one was pulled in 2002. Scientists are testing other ways to block infection.

in Science News: Health & Medicine on 2026-04-20 13:00:00 UTC.

-

Making waves: water simulation of famous quantum effect reveals unexpected patterns

A new study reveals how a spinning vortex causes system-wide, counter-rotating wave patterns, mimicking effects that occur, but cannot be seen, in the quantum realm.

in OIST Japan on 2026-04-20 12:00:00 UTC.

-

Ancient Roman ‘machine-gun’ damage discovered on Pompeii walls

Recently uncovered damage to walls in Pompeii displays patterns that may have been made by an ancient “machine gun” called a polybolos

in Scientific American on 2026-04-20 11:00:00 UTC.

-

‘Cocaine hippos’ raise tough questions, and scientists uncover insights on faster aging and heart risks

“Cocaine hippos,” underground bees, and fresh insights into aging and heart health

in Scientific American on 2026-04-20 10:00:00 UTC.

-

See the spectacular Lyrid meteor shower at its peak

The Lyrid meteor shower hits its peak from April 21 to April 22. Here’s everything you need to know about this annual celestial light show

in Scientific American on 2026-04-20 10:00:00 UTC.

-

To understand decision-making, we need to truly challenge lab animals

Complex, multidimensional tasks that unfold over time could reveal how different brain areas work together to support decisions.

in The Transmitter on 2026-04-20 04:00:21 UTC.

-

Why game theory could be critical in a nuclear war

Military strategists use game theory to evaluate possible strategies—but there are limits to what this approach to decision-making can achieve

in Scientific American on 2026-04-19 12:00:00 UTC.

-

How a Renaissance gambling dispute spawned probability theory

A dispute over how to divvy up the pot in an interrupted game of chance led early mathematicians to invent modern risk assessment

in Scientific American on 2026-04-19 11:00:00 UTC.

-

10 years ago, Elisabeth Bik published a preprint heard around the world

If you are at all familiar with scientific sleuthing, you’re familiar with Elisabeth Bik. She is quoted so often in the mainstream media it is probably difficult to imagine a time when her supersense for spotting similarities in images wasn’t making headlines.

But it was 10 years ago, on April 19, 2016, when she made her debut, when we covered her work screening more than 20,000 biomedical research papers containing western blots. She and coauthors Ferric Fang – a member of the board of directors of our parent nonprofit organization, The Center for Scientific Integrity, and a professor at the University of Washington in Seattle – and Arturo Casadevall, of the Johns Hopkins School of Medicine in Baltimore, posted the work as a preprint on bioRxiv.org and it appeared two months later in mBio.

The preprint was a shot across the bow for journals and publishers, and in the decade since, Bik has advised and mentored others doing similar work. In 2024, she won the Einstein Foundation Award for “identifying misconduct and potential fraud in scientific publications, highlighting science’s problems policing itself.” She donated the proceeds to The Center for Scientific Integrity to create a fund to help other sleuths do their work.

Bik spoke with us earlier this month about the paper, sleuthing and more. The conversation has been edited for clarity and brevity.

Retraction Watch: We first interviewed you in 2016 just as you and your coauthors posted your review of western blots in 20,621 papers. Ten years later, do you know what has happened with those papers?

Elisabeth Bik: Well, not all 20,000, but the 800 or so papers that I found problems in, yes. Of the 782, 177 have been retracted, 42 have an expression of concern and 256 have been corrected. And if I count all three of them, that’s 475, so 60%.

RW: Do you think that should be 100%?

Bik: Yeah, I would have loved it to be a little bit closer to 100%. You can see papers are still being corrected. Like this paper, for example, was retracted in 2024, but I reported it in 2015. [On Zoom, Bik was pointing at the spreadsheet she uses to track papers.] Most of these were reported to the journals in 2015.

RW: What did people think of your paper?

Bik: It was rejected four or five times. In the end, we were like, we’ll just put it as a preprint and do an interview with Retraction Watch.

Nobody believed this paper. People didn’t believe I scanned 20,000 papers over a period of maybe roughly, I would say a year or two. I did a count on how much time I used to scan one paper. And it was about one minute per paper. Really, I’m not reading a paper, I’m just looking at the images. We took it out in the end because so many people were like, that’s impossible. I’m proud of it, but that’s apparently the point that breaks everybody.

People also wanted to know, ‘what is your false-positive and false-negative rates’? We weren’t quite sure. There’s no real gold standard for it. Like what is standard for image duplication? I was the first to raise this. So it’s hard to have to test it against another test. And I also don’t know how many papers I missed. I think we were more worried about claiming a positive where it wasn’t a positive. So that’s why my two coauthors were incredibly helpful. But I know I must have missed a lot of these problems.

RW: But 782 out of 20,000 is not nothing.

Bik: Yeah, it’s 4%, or 1 in 25.
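The figures quoted in this exchange can be checked with a few lines of arithmetic. The sketch below is not part of the interview; it only uses the counts stated above (20,621 papers screened, 782 flagged, 177 retracted, 42 with an expression of concern, 256 corrected), and the variable names are illustrative.

```python
# Counts as quoted in the interview; variable names are illustrative.
screened = 20_621   # papers Bik and coauthors screened
flagged = 782       # papers in which problems were found
retracted, concerns, corrected = 177, 42, 256

# Papers on which a journal has taken some editorial action.
acted_on = retracted + concerns + corrected
acted_share = acted_on / flagged

# Share of all screened papers that were flagged.
flagged_share = flagged / screened

print(f"{acted_on} of {flagged} flagged papers acted on ({acted_share:.1%})")
print(f"{flagged_share:.1%} of screened papers flagged, "
      f"about 1 in {screened / flagged:.0f}")
```

The exact ratios come out slightly different from the rounded figures given in conversation (a little over 60% acted on, a little under 4% flagged), which is consistent with the quotes being approximations.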

RW: You’re known for finding duplications and manipulations in images, but you started out scrutinizing papers for plagiarism.

Bik: That is how it all started. I found that somebody had plagiarized my work. And I worked on plagiarism for nine months or so. And then I came across a Ph.D. thesis that had not only plagiarized text in the introduction, but also a duplicated image that my eye was drawn to. And that evening, I was thinking, wait, that happens? Maybe I should open a couple of PLOS One papers. And I found a couple already that evening. Otherwise, I would not have been talking to you today. Looking back, it’s one of those little moments that change your career.

RW: You had a recent correction to a paper you coauthored.

Bik: All my papers have been criticized, scrutinized. In a way, it’s fair. I criticize others, people can criticize me. In that paper there was a splicing where we left out a group, and you could see a remnant of a line. It wasn’t like we were trying to change the results or anything. But we corrected it. We found a lot of the original data and we worked with the journal to correct it.

All my papers have been torn apart for the weirdest reasons. You have to put so much work into addressing these things. In a way, it’s fair to be criticized, but I do feel sorry for my coauthors who are dragged into these long discussions.

RW: Do you still scan papers by eye or are you mostly using software?

Bik: Both. Sometimes I see the problem right away, and then I run it through Imagetwin and Proofig. Especially duplications between papers is something I’m not good at, because I cannot remember a million other papers, but the software can. Now you scan these papers and it finds, look, that blot has been used in that other paper, but it’s flipped and it’s representing a different protein. And so it’s the same photo, it’s just flipped and resized a bit. It’s very clear once you compare it, but I would never be able to remember all these blots and all these papers and see these patterns. So we’re finding more of these problems with these software tools that have these libraries of images.

RW: You, and many others – including Retraction Watch – have been accused of targeted attacks in post-publication peer review on social media. What effect does that have on your work?

Bik: It worries me a bit, especially when they tag my family. I’m always a bit worried about personal safety. Sometimes the critics will send emails to the host of an event I’m speaking at and say that I’m fraudulent. You have to say to the organizers, I’m very sorry you’re bothered by my enemies. And then, there’s talk about it. What should we do? Should we respond? Should we not respond? Emails have to be sent to all these dozens of people to not respond. It’s just a lot of work for everybody involved. And I feel so sorry that comes on top of organizing a conference, which already is a lot of work. On the other hand, I think it’s good that they see my work does result in personal criticism.

RW: Sleuths have become an essential part of the whole research integrity ecosystem. How has that changed in the last 10 years?

Bik: I think it’s wonderful to have this growing community because this work, at least the way I do it, is very by myself, which I like. I’m a super-introvert. I don’t really work well with other people. I like to be loosely connected to a community. We’re all sort of a bunch of misfits. I love to be independent. Then there’s other communities who are meta scientists. And people working at publishers doing this work are also wonderful people. And I think all the noses are sort of starting to point in the same direction, which is lovely. It’s becoming part of what science should be. But you have to start in a way that upsets a lot of people and makes people uncomfortable.

There’s still a lot of room to grow. I think we all agree on that. If you buy a car and the airbag is not good, there should be a recall, right? It should be better. Moving forward, all the cars should have better airbags or better wheels that don’t fall off. If we buy a product, we should be able to complain about it. There should be quality control and there should be customer service. And I think that was a bit lacking in the scientific publishing world. And both of these things are getting better. We are growing towards each other and learning from each other.

RW: One of the criticisms we’re seeing as a result of some of the big misconduct cases is the belief that they mean we can’t trust science. What do you say to that?

Bik: I end most of my talks with this exact point. I’m talking about that one rotten apple in the fruit basket. I love science and I do this to make science better. Maybe I’m considered a vigilante because I point out the bad stuff, but it doesn’t mean that we cannot trust science. We should just do a little bit better in screening before we publish things. We should be critical. And I feel we can all agree on that.

But it has been used, weaponized, in the misinformation era where people say, all science is fraudulent, that you cannot trust any science paper. I think that is the wrong attitude, but it’s the double-edged sword we’re working with.

It’s very easy to draw that conclusion, but that is the wrong conclusion. We need science.

in Retraction watch on 2026-04-19 10:00:00 UTC.

-

Master of chaos wins $3-million math prize for ‘blowing up’ equations

For decades, mathematician Frank Merle has been embracing the messy math behind lasers and fluids

in Scientific American on 2026-04-18 23:00:00 UTC.

-

The science behind the peptide craze

The world of peptides has exploded in wellness circles, but the benefits of injecting these gray-market molecules rest on little clinical evidence

in Scientific American on 2026-04-18 12:00:00 UTC.

-

NSF awards record number of coveted Ph.D. fellowships in surprise move

Quantum science and AI research are big winners just a year after this U.S. funding giant slashed its Graduate Research Fellowship Program awards in half

in Scientific American on 2026-04-18 11:30:00 UTC.

-

Weekend reads: An alternative to the impact factor in China; the clinical trials of six ‘superretractors’; Retraction Watch goes to Capitol Hill

If your week flew by — we know ours did — catch up here with what you might have missed.

The week at Retraction Watch featured:

- Scientist who alleged COVID cover-up circulated a faked NIH email, agency says

- BMJ retracts most of a special issue for ‘compromised’ peer review and ‘improbable device use’

- ‘Game-changer’ breast cancer study retracted as Indiana researcher out of his post

- Retraction Watch testifies in Congressional hearing on scientific publishing. Coverage of the hearing in Nature and Inside Higher Ed.

- 45 editors resign from math journal, former EIC calls Elsevier publisher a ‘mini-dictator’

In case you missed the news, the Hijacked Journal Checker now has more than 400 entries. The Retraction Watch Database has over 64,000 retractions. Our list of COVID-19 retractions is up to 650, and our mass resignations list has more than 50 entries. We keep tabs on all this and more. If you value this work, please consider showing your support with a tax-deductible donation. Every dollar counts.

Here’s what was happening elsewhere (some of these items may be paywalled, metered access, or require free registration to read):

- “China proposes a new way to measure academic influence in a departure from impact factor.”

- “Six ‘Superretractors’ Responsible for Large Number of Retracted Clinical Trials,” study finds.

- A cancer research pioneer with five retractions a decade ago earns an expression of concern for a Nature paper.

- “Can journals that pay peer reviewers succeed?”

- “China shifts research funding focus away from journal fees.”

- Study of PLOS journals finds articles with open peer review “much less likely” to be retracted.

- Researchers look at retractions in the orthodontic literature.

- “Where Does Publishing’s A.I. Problem Leave Authors and Readers?”

- “When Authorship Goes Wrong: Why SIGCHI Conferences Freeze Author Lists and What That Means.”

- “Citations of Retracted Ophthalmology Papers Persist, Study Warns.”

- “Academic fraud may be the symptom of a much more systemic problem.”

- Korean education ministry audits Kookmin University over governance and former first lady.

- “Frontiers issues AI guidance spanning full publishing lifecycle.”

- “I’m much less sure about where to submit my papers than I used to be,” writes ecologist.

- “Discoverability matters: Open access models and the translation of science into patents.”

- “Dozens of AI disease-prediction models were trained on dubious data,” finds preprint.

- The U.K. Research Excellence Framework’s “experiment with research culture was always doomed.”

- Researcher points out a university awarded a thesis that cited a retracted study to attribute “anti-cancer properties to a plant.”

- “Citation self-awareness for a fairer academic publishing landscape.”

- “China discontinues prominent journal ranking list.”

- “Authorship, Accountability, and the Erosion of Scientific Publishing.”

- “Adopting a united front against paper mills”: On international stakeholder group United2Act Against Paper Mills.

- “Questionable Research Practices: A Principled Classification and Ranking Based on Survey Data.”

- “Flawed study on the antidepressant Paxil came with a cautionary note — if you knew how to find it.” A judge tossed the lawsuit on the study earlier this month.

- “Mouse neurobehaviorist by day; research integrity watchdog by night.”

Upcoming talk

- National Academies “Workshop on Enhancing Scientific Integrity Progress and Opportunities in the Social and Behavioral Sciences” featuring our Ivan Oransky (April 24, virtual)

in Retraction watch on 2026-04-18 10:00:00 UTC.

-

Did AI just solve the mystery of one of El Greco’s most enigmatic paintings?

For years, art historians believed The Baptism of Christ was likely painted by El Greco with assistance from other artists. But new research suggests otherwise

in Scientific American on 2026-04-17 18:00:00 UTC.

-

How to invent a realistic language for fictional speakers

Linguists can mix, match or even break the rules of real-world languages to create interesting imaginary ones.

in Science News: Science & Society on 2026-04-17 16:00:00 UTC.

-

Songbirds reveal the dark side of making new brain cells as adults

A new study in songbirds might help explain why humans don’t generate many new brain cells, called neurons, as adults

in Scientific American on 2026-04-17 15:00:00 UTC.

-

What’s the weirdest planet in the solar system?

All the sun’s planets are oddballs. But some are more so than others

in Scientific American on 2026-04-17 14:30:00 UTC.

-

What is Mythos and why are experts worried about Anthropic’s AI model

The company says Mythos is too dangerous to release publicly. Cybersecurity experts agree the model's capabilities matter, but not all of them are buying the most alarming claims

in Scientific American on 2026-04-17 14:30:00 UTC.

-

How your body and brain construct chronic pain

Author Rachel Zoffness breaks down why we have chronic pain and how science shows that it’s all in our head

in Scientific American on 2026-04-17 13:00:00 UTC.

-

Know the legal age to buy tobacco products in the U.S.? Many parents don’t

A study finds that less than half of surveyed parents know the legal age, 21, to buy cigarettes, vapes, nicotine pouches and other tobacco products.

in Science News: Health & Medicine on 2026-04-17 12:00:00 UTC.

-

AI music is reviving the same fights that shaped the player piano

As AI songs get harder to tell apart from human-made music, an older technology offers a revealing preview of the fight over artistry, labor and pay

in Scientific American on 2026-04-17 11:00:00 UTC.

-

Mars orbiter watches mysterious wave of darkness spread across red planet’s surface

Observations by the Mars Express orbiter reveal rapid changes on the Red Planet’s surface from windblown volcanic ash

in Scientific American on 2026-04-17 11:00:00 UTC.

-

Why birds were the only dinosaurs to survive Earth’s worst day

How a few unique traits helped modern-style birds—the last living dinosaurs—survive the asteroid apocalypse that took out T. rex and other mighty beasts

in Scientific American on 2026-04-17 10:00:00 UTC.

-

Schneider Shorts 17.04.2026 – Saving the planet never tasted this good

Schneider Shorts 17.04.2026 - an anti-aging clinical trial with bonus eye-cancer, a papermiller turns to full-time mushrooming, an artist-scientist educates a youngster, German and Canadian cancer researchers unconcerned, retractions in Italy and Poland, Springer Nature changes history, and finally, a shrimp virus turns people blind!

in For Better Science on 2026-04-17 05:00:00 UTC.

-

‘Overdue’ debate unfurls over neuroimaging method

After a January paper questioned the validity of an approach called lesion network mapping, its users are pressure testing their results.

in The Transmitter on 2026-04-17 04:00:16 UTC.

-

Former deputy surgeon general Erica Schwartz nominated as new CDC chief

The White House has nominated Erica Schwartz to replace NIH director Jay Bhattacharya as CDC chief. Bhattacharya has been leading the CDC on an acting basis since February, after the public health agency’s director was fired in 2025

in Scientific American on 2026-04-16 21:15:00 UTC.

-

NASA Artemis II astronauts say thank you to the world

Astronauts Reid Wiseman, Victor Glover, Christina Koch and Jeremy Hansen reflected on the highs and lows of their moon mission—the first of its kind in more than 50 years

in Scientific American on 2026-04-16 20:00:00 UTC.

-

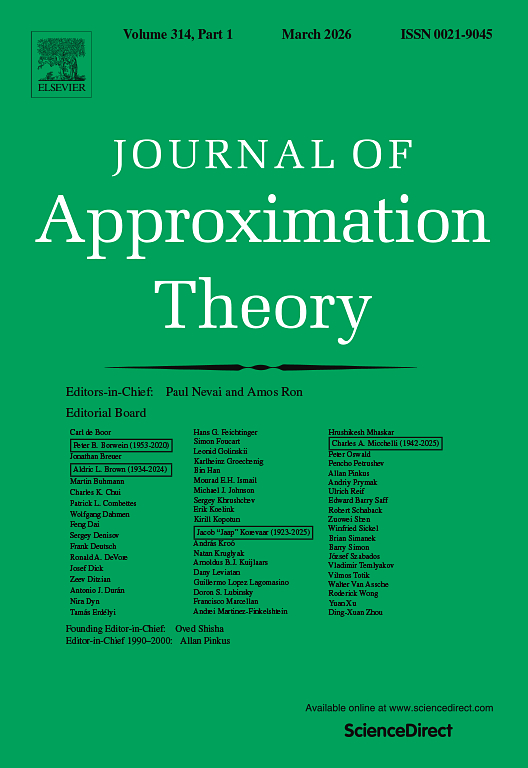

45 editors resign from math journal, former EIC calls Elsevier publisher a ‘mini-dictator’

Forty-five of 48 members of the editorial board of the Journal of Approximation Theory resigned earlier this month for what they called Elsevier’s “concerning and potentially detrimental” decisions regarding the publication.

Paul Nevai, formerly a professor at The Ohio State University, was appointed editor-in-chief of JAT in 1990 and held the position for 35 years until December. That’s when he reached the end of his term and Elsevier informed him they’d be filling the position with someone else.

The mass resignation came after what Nevai said were several years of bad blood between the editors of the journal (including him) and the publisher, Giampiero Accardo. A representative for Elsevier told us designated publishers like Accardo are Elsevier employees who “oversee a portfolio of academic journals within a subject area, working closely with editors, authors, and research communities to support their development and long-term success.”

An April 3 email signed by 45 editors and both former editors-in-chief states: “While the publisher may seek to continue the journal under its existing name, in our view, the journal as we have known it has effectively ceased to exist.”

The journal was founded in 1968 and published by Academic Press until it was acquired by Elsevier in 2001.

Elsevier “made a series of decisions that a substantial majority of the editors found deeply concerning and potentially detrimental to the journal’s future,” the group resignation letter reads. “Despite efforts to address these concerns through discussions with the publisher, a mutually satisfactory resolution could not be reached.”

The letter doesn’t explicitly detail which decisions Elsevier made that the editors found problematic. Nevai told us the publisher increased oversight, employed heavy-handed involvement in editorial decisions and attempted to speed up the article production process.

Only three editors remain on the journal’s website. Retraction Watch reached out to them for comment but they did not respond.

“Editorial succession and rotation are important factors in ensuring the long-term health and sustainability of journals; by rotating editors, fresh approaches and perspectives can be brought to the journal and its community, helping to ensure it continues to serve its field effectively and sustainably,” Elsevier’s representative told us.

“We typically manage these transitions in close partnership with existing editors, often involving them in the nomination of their potential successors over a transition period,” they added.

The April 3 resignation wasn’t the first for the journal. Barry Simon, a prominent mathematical physicist, stepped away earlier this year in protest, Nevai said. Simon did not respond to our request for comment.

Nevai told us that, before Accardo took on the role of publisher, “everything was perfect,” and likened the publisher to a “mini-dictator.” Before the change, Nevai said, he and co-editor-in-chief Amos Ron had authority to appoint editors. But Elsevier was focused on expanding the editorial board to include researchers from a wider range of countries, according to Nevai.

Mathematics is a “completely merit based system,” he said, objecting to the move.

Nevai and Ron reached the end of their three-year terms in December. Nevai told us he expected his contract to be renewed and that he would decide when to retire.

Elsevier told us they had proposed a “collaborative process that included a one-year extension to allow for the identification of suitable successors, with input from the Editorial Board and the wider community. We were unfortunately unable to reach agreement on these points.”

Although Nevai told us he worked as an associate editor after the end of his term, the Elsevier spokesperson said there was “no formal agreement or appointment for him to take on an Associate Editor role. His position remained Editor-in-Chief during the discussions and following the conclusion of these discussions in late March, his access to the editorial system was removed.”

Nevai understands himself to have been effectively fired as associate editor at the end of March via an email from journal manager Priyadharsini Muthukumar “reassigning” four articles he had been given to review.

The journal joins our Mass Resignation List and is the second math journal in less than a month to do so. In March, we covered another mathematics journal whose editorial board resigned en masse over editorial changes enforced by Taylor & Francis.

in Retraction watch on 2026-04-16 19:24:16 UTC.

-

Congress grills RFK, Jr., about vaccines and cuts to health budget

The HHS secretary defended proposed budget cuts to science, his vaccine moves and health care costs on Capitol Hill on Thursday

in Scientific American on 2026-04-16 19:00:00 UTC.

-

How the Grand Canyon formed is a surprisingly messy story. Here's the latest clue

A new study suggests a proto–Colorado River filled a large basin before spilling westward to set the Grand Canyon’s modern path

in Scientific American on 2026-04-16 18:00:00 UTC.

-

Astronomers just finished the biggest, sharpest 3D map of the universe—and it’s beautiful

A new map of the cosmos, including more than 47 million galaxies and other cosmic objects, represents one of the most extensive surveys of our universe ever conducted

in Scientific American on 2026-04-16 17:00:00 UTC.

-

Elizabeth Roboz Einstein—the determined genius behind a multiple sclerosis breakthrough

A Hungarian refugee who came to the U.S. with nothing but a diploma made a breakthrough discovery in the burgeoning field of neurochemistry

in Scientific American on 2026-04-16 16:00:00 UTC.

-

10 dinosaur science books recommended by a paleontologist

Steve Brusatte, author of The Rise and Fall of the Dinosaurs and The Story of Birds, recommends 10 dinosaur books to dig into

in Scientific American on 2026-04-16 15:40:00 UTC.

-

Secrets of cosmic evolution may lurk in this black hole’s ‘dancing’ jets

A first-of-its-kind observation shows how jets from voracious black holes can shape the growth of galaxies

in Scientific American on 2026-04-16 15:40:00 UTC.

-

How far from humanity were the astronauts of Artemis II? The answer will surprise you

Artemis II’s crew went farther from humanity than anyone has been before. Here’s how one scientist determined whom, specifically, they were farthest from

in Scientific American on 2026-04-16 14:22:00 UTC.

Feed list

- Brain Science with Ginger Campbell, MD: Neuroscience for Everyone

- Ankur Sinha

- Marco Craveiro

- UH Biocomputation group

- The Official PLOS Blog

- PLOS Neuroscience Community

- The Neurocritic

- Discover magazine - Neuroskeptic

- Neurorexia

- Neuroscience - TED Blog

- xcorr.net

- The Guardian - Neurophilosophy by Mo Costandi

- Science News: Neuroscience

- Science News: AI

- Science News: Science & Society

- Science News: Health & Medicine

- Science News: Psychology

- OIST Japan

- Brain Byte - The HBP blog

- The Silver Lab

- Scientific American

- Romain Brette

- Retraction watch

- Neural Ensemble News

- Marianne Bezaire

- Forging Connections

- Yourbrainhealth

- Neuroscientists talk shop

- Brain matters the Podcast

- Brain box

- The Spike

- OUPblog - Psychology and Neuroscience

- For Better Science

- Open and Shut?

- Open Access Tracking Project: news

- Computational Neuroscience

- Pillow Lab

- NeuroFedora blog

- Anna Dumitriu: Bioart and Bacteria

- arXiv.org blog

- Neurdiness: thinking about brains

- Bits of DNA

- Peter Rupprecht

- Malin Sandström's blog

- INCF/OCNS Software Working Group

- Gender Issues in Neuroscience (at Stanford University)

- CoCoSys lab

- Massive Science

- Women in Neuroscience UK

- The Transmitter

- Björn Brembs

- BiasWatchNeuro

- Neurofrontiers